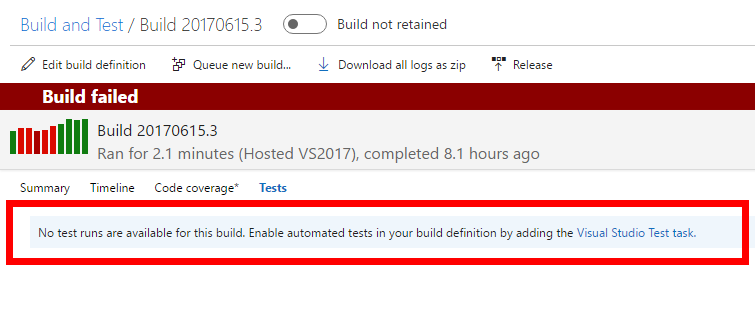

Following up on my previous post: I'm building an ASP.NET Core web application and running my tests with xUnit. The default VSTS template for an ASP.NET Core application runs the tests but does not publish any test results, so opening the test results panel can be a sad sight:

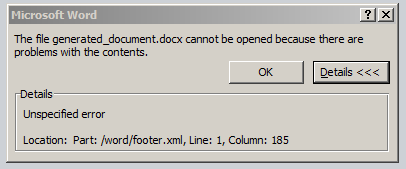

And even if you add a task that publishes test results after `dotnet test`, you will not get far.

As it turns out, the `dotnet test` command does not write any XML files with the test execution results out of the box. That puzzled me.

Luckily, the xUnit site has good instructions on how to set up xUnit with .NET Core properly. In your `*test.csproj` file you need to add roughly the following:

    <Project Sdk="Microsoft.NET.Sdk">

      <PropertyGroup>
        <TargetFramework>netcoreapp1.1</TargetFramework>
      </PropertyGroup>

      <ItemGroup>
        <PackageReference Include="xunit" Version="2.3.0-beta2-build3683" />
        <DotNetCliToolReference Include="dotnet-xunit" Version="2.3.0-beta2-build3683" />
      </ItemGroup>

    </Project>
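
With the `dotnet-xunit` tool reference in place, the runner can be called from the test project folder and asked to write an XML report. The snippet below is only a sketch of how that could look: the file name `TestResults.xml` is my own placeholder, and the check for the `dotnet` CLI is there just so the script does not blow up on a machine without the SDK.

```shell
# Run this from the folder that contains the test .csproj.
# TestResults.xml is a placeholder name; pick whatever your publish task expects.
RESULTS_FILE="TestResults.xml"

if command -v dotnet >/dev/null 2>&1; then
  # Restore packages first so the dotnet-xunit CLI tool becomes available,
  # then run the tests and write the results as xUnit XML.
  dotnet restore && dotnet xunit -xml "$RESULTS_FILE"
else
  echo "dotnet CLI not found, skipping test run"
fi
```

A publish-test-results task can then be pointed at that file, with the result format set to xUnit.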