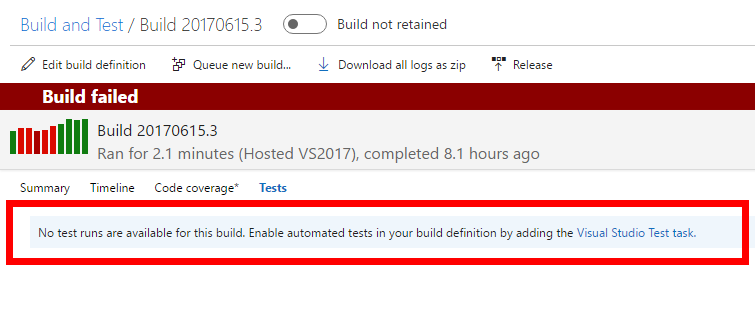

Last night I ran into a problem with GitVersion and the `dotnet pack` command while running my builds on VSTS.

The problem came up when I tried to specify the version number I wanted the package to have. There are answers on Stack Overflow explaining how to do this, and I've used them in the past. But this time it did not work, so I had to investigate.

Here is what worked for me.

To begin, edit your `*.csproj` file and make sure your package information is there:

```xml
<PropertyGroup>
  <OutputType>Library</OutputType>
  <PackageId>MyPackageName</PackageId>
  <Authors>AuthorName</Authors>
  <Description>Some description</Description>
  <PackageRequireLicenseAcceptance>false</PackageRequireLicenseAcceptance>
  <PackageReleaseNotes>This is a first release</PackageReleaseNotes>
  <Copyright>Copyright 2017 (c) Trailmax. All rights reserved.</Copyright>
  <GenerateAssemblyInfo>false</GenerateAssemblyInfo>
</PropertyGroup>
```